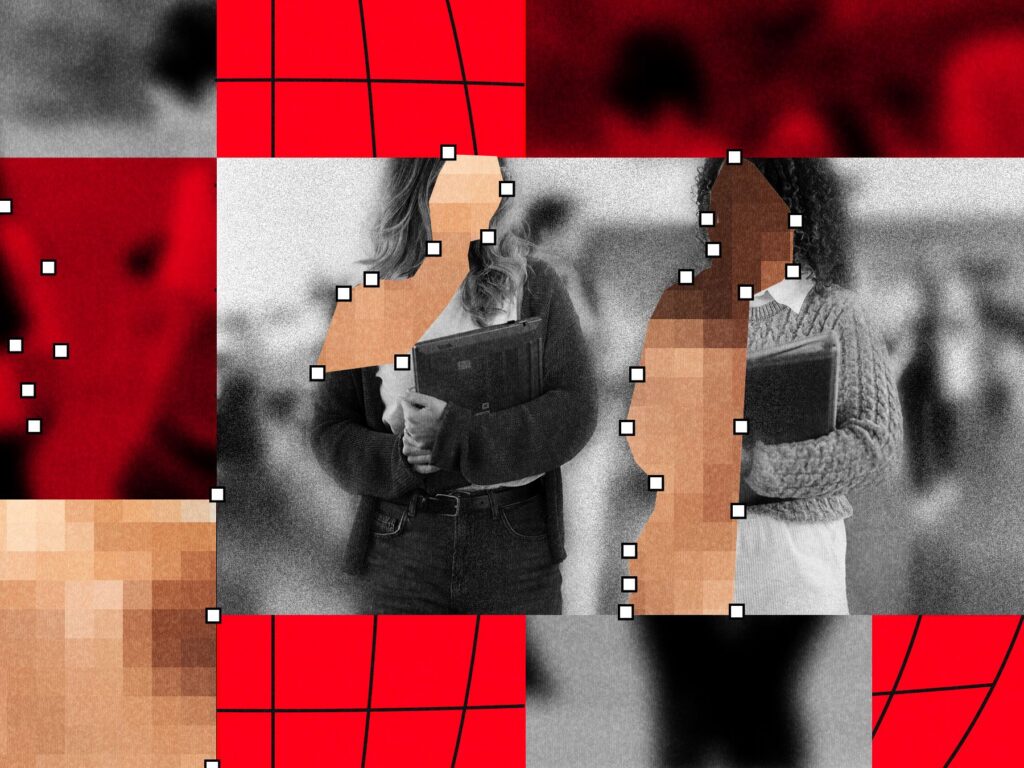

The Deepfake Nudes Crisis in Schools Is Much Worse Than You Thought

Summary

WIRED, in partnership with Indicator, analysed publicly reported incidents and found that AI-generated sexual deepfakes have affected nearly 90 schools and about 600 students worldwide, with cases reported in at least 28 countries since 2023. The phenomenon typically starts with social-media photos and cheap, accessible “nudify” tools that enable pupils—most often teenage boys—to create and share fake explicit images and videos of classmates and, in some cases, teachers.

The story highlights the scale, speed and accessibility that generative AI brings to a long-standing problem of sexual harassment: harms are severe for victims, school and law-enforcement responses are inconsistent, and the true prevalence is likely far higher than public reports suggest.

Key Points

- Drawing on public reporting, WIRED and Indicator identified nearly 90 schools and roughly 600 pupils affected by AI sexual deepfakes; incidents span at least 28 countries since 2023.

- The typical pattern: photos scraped from social media are run through “nudify” apps—often by teenage boys—and shared across school networks via messaging apps and social platforms.

- Sexual deepfakes of minors are legally child sexual abuse material (CSAM); responses range from criminal charges to school suspensions, but many cases see weak or delayed action by authorities.

- Independent estimates (for example, from UNICEF and Thorn) suggest the real scale is far larger: UNICEF estimates that sexual deepfakes were created of about 1.2 million children last year.

- Motivations among teenagers vary: sexual curiosity, revenge, humiliation, dares and social control — not always sexual gratification.

- Schools are changing practices (dropping yearbook photos, limiting student images online) and some jurisdictions are moving to ban nudification apps or fast-track takedown rules for non-consensual intimate imagery.

- Experts say prevention requires training, clear policies, digital-forensics readiness and better support for victims — but many schools lack resources and awareness.

Context and relevance

This piece sits at the intersection of AI, child protection and school policy. As generative AI drops technical barriers, problems that once required technical skill become routine for adolescents with a phone. That amplifies existing gendered harms and places pressure on educators, police and lawmakers to catch up.

For anyone working in education, safeguarding, child protection, policymaking or platform safety, the article explains current patterns, legal implications and practical steps schools are taking — and why those steps may be insufficient without coordinated policy and faster platform action.

Why should I read this?

Because this isn’t some niche tech worry — it’s happening in schools near you, and the tools make it stupidly easy. Read this if you care about kids’ safety, sensible school policy or how AI is being weaponised in everyday life. It’s grim, it’s spreading, and adults are miles behind; the article saves you the legwork by laying out what’s happening, why it matters and what’s already being tried.

Source

https://www.wired.com/story/deepfake-nudify-schools-global-crisis/