OpenAI Backs Bill That Would Limit Liability for AI-Enabled Mass Deaths or Financial Disasters

Summary

OpenAI has publicly supported Illinois bill SB 3444, which would shield developers of so-called “frontier” AI models from liability for “critical harms”, including the death or serious injury of 100 or more people or at least $1 billion in property damage, provided the developer did not act intentionally or recklessly and has published safety, security and transparency reports. The bill defines a frontier model as one trained using more than $100 million in compute, a threshold that would capture the major AI labs. OpenAI argues the approach helps avoid a patchwork of state laws and moves toward national harmonisation; critics say it could reduce accountability and undermine incentives for rigorous safety work. The article places the bill in the wider context of unsettled federal AI legislation and ongoing lawsuits alleging individual harms linked to chatbots.

Key Points

- SB 3444 would limit liability for “critical harms” caused by frontier AI models if the developer didn’t act intentionally or recklessly and published required safety, security and transparency reports.

- A “frontier model” is defined as one trained using more than $100 million in compute, a threshold likely to cover big labs such as OpenAI, Google, Anthropic, Meta and xAI.

- Critical harms include mass casualty events, large financial disasters and the use of AI to help create chemical, biological, radiological or nuclear weapons.

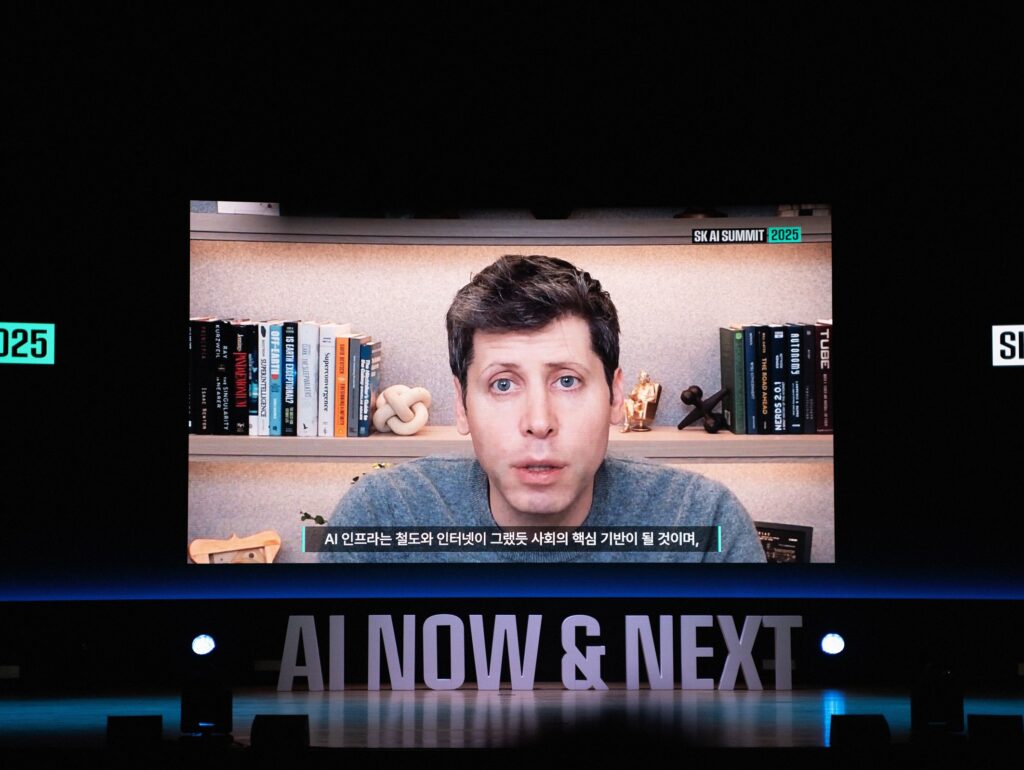

- OpenAI supports the bill as a route to national consistency and to protect innovation; its testimony urged harmonisation with federal rules.

- Critics argue the bill would lower accountability and is widely unpopular with the public; Illinois has a history of stronger tech regulation.

- Federal AI legislation remains unresolved, so states continue to advance differing safety and transparency requirements.

Context and relevance

The bill matters because it addresses who is legally responsible when AI causes catastrophic harm. A law that shields labs from liability for harm on this scale would reshape litigation risk, safety incentives and corporate behaviour across the industry. For policymakers, legal teams, enterprise buyers and safety researchers, SB 3444 is a potential precedent: if liability protections spread, the motivation to invest in tougher safety measures could weaken; if such protections fail, companies may face greater legal exposure and pressure to harden models and disclosures.

Why should I read this?

Because this could change who gets sued — and who pays — if AI systems ever trigger mass harm. Short and blunt: OpenAI backing this kind of liability shield is a big deal. Read it to know where the legal wind might be heading and what that means for accountability and safety.

Author style

Punchy: the coverage is crisp and signals a possible turning point in AI regulation. If you care about accountability, national policy or corporate risk, this isn’t just another press release — it’s a cue to read the fine print.

Source

https://www.wired.com/story/openai-backs-bill-exempt-ai-firms-model-harm-lawsuits/